The ARM vs. x86 debate is no longer about raw clock speed; it’s about which architecture delivers better system-level efficiency, and ARM is taking a decisive lead.

- ARM’s advantage stems from its System-on-a-Chip (SoC) integration and unmatched performance-per-watt, allowing it to dominate mobile and aggressively expand into data centers and supercomputers.

- x86 maintains a stronghold in the legacy desktop and server market but faces fundamental challenges in power consumption and thermal management that require complex and costly engineering workarounds.

Recommendation: For tech enthusiasts and investors, the critical metric is no longer gigahertz alone but performance-per-watt and total cost of ownership. Evaluating technology through this lens reveals a clear architectural trajectory for the future.

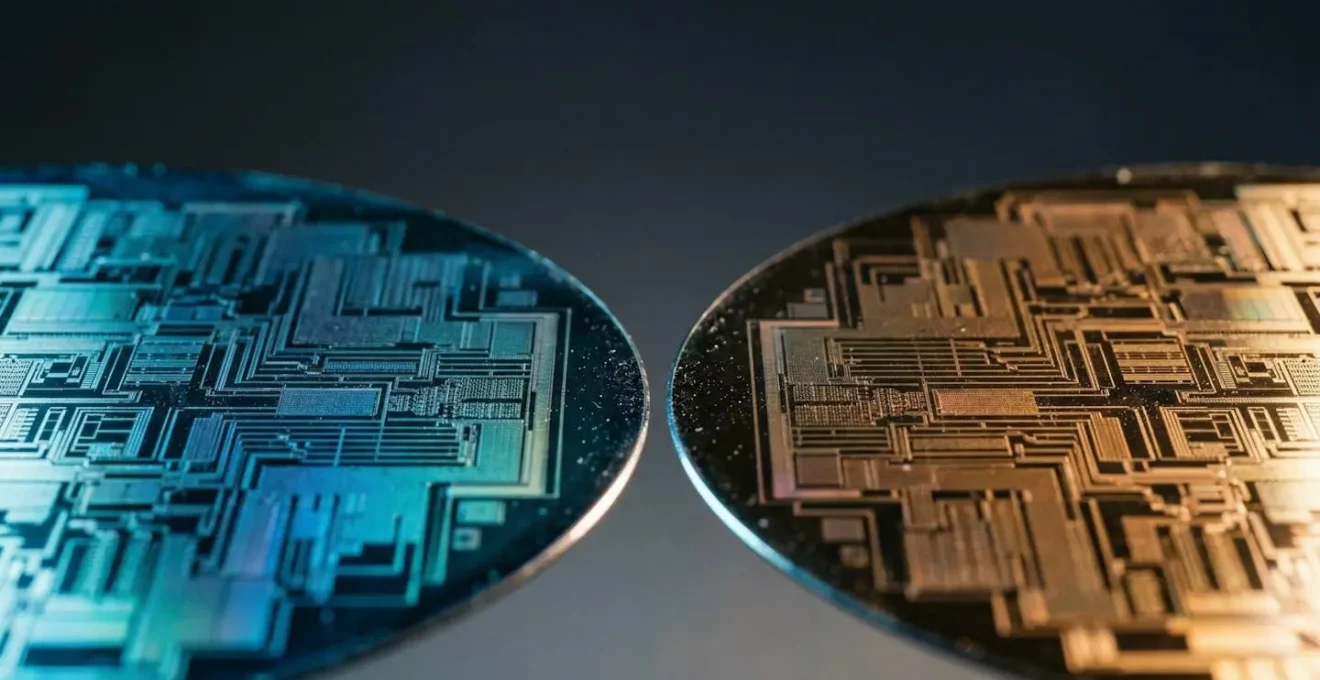

The faint whir of a laptop fan or the warmth spreading across its base isn’t just a minor annoyance; it’s a physical symptom of a deep-seated architectural war being waged at the heart of the tech industry. For decades, the battle lines seemed clearly drawn. The x86 architecture, championed by Intel and AMD, was the undisputed king of performance, powering everything from gaming desktops to massive data centers. In the other corner, ARM reigned over the mobile world, its DNA coded for power efficiency and long battery life. The common narrative was a simple trade-off: brute force versus stamina, CISC versus RISC.

This comfortable dichotomy is now obsolete. The success of Apple’s M-series silicon was not an anomaly but a declaration that the rules have changed. The conversation has evolved beyond instruction sets. The real fight for the future of computing is no longer about raw clock speed but about intelligent, integrated system-level efficiency. It’s a paradigm shift from a philosophy of brute force to one of holistic design, where power management, thermal headroom, and security are not afterthoughts but core tenets of the architecture itself.

But if the key isn’t just the instruction set, what are the real battlegrounds? This analysis will move beyond the surface-level debate. We will dissect the critical technological frontiers, from the nanometer scale of 3nm process nodes to the economics of cloud data centers, to reveal the underlying forces at play. By examining how each architecture handles power, thermals, heterogeneous computing, and security, we can build a clear, forward-looking view of which is truly poised to dominate the next era of computing.

To fully grasp the strategic shifts underway, this article delves into the specific technological arenas where the competition between ARM and x86 is most fierce. The following sections explore these key battlegrounds, providing a comprehensive analysis of the strengths, weaknesses, and future trajectory of each architecture.

Summary: ARM vs x86: The New Battlegrounds for Computing Supremacy

- Why Does Moving to 3nm Processes Matter for Your Laptop’s Battery?

- How to Apply Thermal Paste Correctly to Drop CPU Temps by 5°C?

- Single Core vs Multi-Core: What Actually Matters for Gaming?

- The VRM Mistake That Cripples Your High-End Processor’s Performance

- How to Safely Overclock Your CPU Without Voiding the Warranty?

- Classical Supercomputers vs Quantum: Which Wins for Drug Discovery?

- Xbox Cloud vs Stadia Tech: Which Streaming Architecture Wins?

- When Will Quantum Computers Break Current Encryption Standards?

Why Does Moving to 3nm Processes Matter for Your Laptop’s Battery?

The move to smaller manufacturing processes like TSMC’s 3-nanometer (3nm) node is far more than a marketing bullet point; it is a fundamental enabler of the new computing paradigm. At this microscopic scale, transistors become smaller, faster, and, most importantly, more power-efficient. This isn’t an incremental improvement; it’s a leap. For a laptop user, this translates into a device that can run faster, or run longer on a single charge, because the processor is one of the biggest draws on the battery. For instance, initial data on 3nm nodes points to up to 35% less power consumption at the same performance level compared with the previous 5nm generation.
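To put the 35% figure in perspective, here is a back-of-the-envelope Python sketch. It assumes, hypothetically, that only the CPU’s share of the power budget shrinks while the display, memory, and radios draw exactly what they did before; the battery size and wattages are invented for illustration, not measured.

```python
def runtime_hours(battery_wh: float, system_watts: float) -> float:
    """Battery runtime in hours at a constant average power draw."""
    return battery_wh / system_watts


def runtime_with_cpu_savings(battery_wh: float, cpu_watts: float,
                             rest_watts: float, cpu_savings: float) -> float:
    """Runtime after cutting only the CPU's power by `cpu_savings` (0.35 means 35%).

    The rest of the system (display, memory, radios) is assumed unchanged,
    so the runtime gain is smaller than the CPU saving itself.
    """
    new_cpu_watts = cpu_watts * (1.0 - cpu_savings)
    return runtime_hours(battery_wh, new_cpu_watts + rest_watts)


# Hypothetical laptop: 60 Wh battery, 6 W average CPU draw, 4 W for everything else.
before = runtime_hours(60.0, 6.0 + 4.0)
after = runtime_with_cpu_savings(60.0, 6.0, 4.0, 0.35)
print(f"before: {before:.1f} h, after: {after:.1f} h")
```

With these made-up numbers, runtime rises from 6.0 to about 7.6 hours, roughly 27% longer. That is smaller than the 35% CPU saving because the rest of the system still draws the same power, which is why process gains pay off most in devices where the processor dominates the power budget.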

This is where the architectural philosophies of ARM and x86 diverge strategically. ARM architectures, designed from the ground up for low-power mobile environments, are positioned to extract maximum benefit from these process advancements. Their simpler, more streamlined design scales down with incredible efficiency. x86, with its complex instruction set and legacy of high-power design, also benefits, but it starts from a higher baseline power draw, so even after a similar percentage reduction its absolute consumption remains higher. As one analyst noted regarding the race to secure this advanced manufacturing capacity:

Apple’s position as the most important foundry customer secures the leading-edge node capacity. This means that, going forward, Apple’s M-series of chips will be the ones to beat, whether it is power envelopes, efficiency or brute force performance.

– Industry analyst, Quora discussion on TSMC 3nm node adoption

This relentless pursuit of process leadership by ARM champions like Apple is not just about making thinner laptops. It’s a strategic move to widen the performance-per-watt gap, creating a compounding advantage. By leading the charge on 3nm, the ARM ecosystem establishes a new baseline for efficiency that legacy x86 designs, with their higher thermal and power demands, find increasingly difficult to match. This battle is won not in a single benchmark but over hours of unplugged, high-performance use.

How to Apply Thermal Paste Correctly to Drop CPU Temps by 5°C?

The discussion of thermal paste might seem like a niche topic for PC builders, but it exposes a critical vulnerability in the traditional high-performance computing model dominated by x86. Thermal paste is a thermally conductive compound used to fill microscopic air gaps between a CPU’s heat spreader and its cooler, ensuring efficient heat transfer. Improper application can lead to thermal throttling, where the CPU intentionally reduces its clock speed to prevent overheating, crippling performance. This entire ritual highlights a core challenge for x86: managing the immense heat generated by its pursuit of raw clock speed.

This necessity for elaborate cooling solutions—from exotic thermal pastes to large air coolers and complex liquid cooling loops—is a direct consequence of an architecture that has historically prioritized brute-force performance over thermal headroom. The heat generated is a byproduct of the high power required to drive complex instructions at ever-increasing frequencies. For x86, performance is a constant negotiation with the laws of thermodynamics.
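The link between clock speed and heat can be sketched with the textbook estimate of CMOS switching power, P = αCV²f. The Python below is a rough model that ignores leakage power; the activity factor, capacitance, voltages, and frequencies are hypothetical. The formula applies to any CMOS chip, ARM included; what differs is how far up the voltage/frequency curve a design is pushed.

```python
def dynamic_power(activity: float, capacitance_f: float, volts: float, hz: float) -> float:
    """Classic CMOS switching-power estimate: P = alpha * C * V^2 * f (watts)."""
    return activity * capacitance_f * volts ** 2 * hz


# Hypothetical operating points: the higher clock also needs a higher voltage.
base = dynamic_power(activity=0.2, capacitance_f=1e-8, volts=1.0, hz=3.0e9)
boost = dynamic_power(activity=0.2, capacitance_f=1e-8, volts=1.2, hz=4.0e9)
print(f"base: {base:.2f} W, boost: {boost:.2f} W, ratio: {boost / base:.2f}x")
```

In this sketch a 33% higher clock costs about 1.9 times the switching power, because the extra voltage enters the formula squared. Every one of those extra watts ends up as heat that the thermal paste and cooler must carry away.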

In contrast, the ARM architecture’s focus on performance-per-watt fundamentally changes this equation. Because ARM-based System-on-a-Chip (SoC) designs are inherently more power-efficient, they generate significantly less waste heat for a given task. This is why an M-series MacBook Air can deliver strong performance without a fan, while fanless x86 ultrabooks have generally had to settle for much lower-power chips. The “problem” of thermal paste application, while still relevant in high-end systems, is less of an existential threat and more of a fine-tuning exercise in the ARM world. The focus shifts from desperately evacuating heat to intelligently managing a much smaller thermal envelope.

Action Plan: Your Thermal Performance Audit

- Baseline Monitoring: Before making any changes, use monitoring software (like HWMonitor or Core Temp) to record your CPU’s idle and full-load temperatures over a 15-minute stress test. This is your baseline.

- Cooler and Fan Inspection: Physically inspect your CPU cooler for dust buildup. Check that all fans (case and CPU) are spinning correctly and are free of obstructions. Clogged fins are a primary cause of high temps.

- Thermal Paste Assessment: If your system is over 3 years old or you’ve removed the cooler, it’s time to re-apply paste. Check the existing paste pattern upon removal; a thin, even spread is ideal. Dry, cracked, or patchy paste must be replaced.

- Airflow Path Analysis: Map the airflow in your case. Ensure you have a clear path from intake fans (usually front/bottom) to exhaust fans (usually rear/top). Cable management isn’t just for looks; it’s critical for unobstructed airflow.

- Re-test and Compare: After cleaning and re-pasting, run the same 15-minute stress test, ideally at a similar room temperature. Compare the new full-load temperatures to your baseline. A 5°C or greater drop indicates a successful thermal overhaul.
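The compare step of the audit can be sketched in a few lines of Python. The readings are hypothetical, and subtracting room temperature from each run is an assumption added here so that a cooler day does not masquerade as a successful re-paste; the 5°C target comes from the checklist above.

```python
from dataclasses import dataclass


@dataclass
class ThermalRun:
    """One 15-minute stress-test session (all temperatures in °C)."""
    load_samples_c: list[float]  # CPU package readings logged during the test
    ambient_c: float             # room temperature at the time of the test

    def peak_over_ambient(self) -> float:
        """Peak load temperature relative to the room, so runs on different days compare fairly."""
        return max(self.load_samples_c) - self.ambient_c


def thermal_audit(baseline: ThermalRun, retest: ThermalRun,
                  target_drop_c: float = 5.0) -> tuple[float, bool]:
    """Return the ambient-corrected drop in peak load temperature and whether it meets the target."""
    drop = baseline.peak_over_ambient() - retest.peak_over_ambient()
    return drop, drop >= target_drop_c


# Hypothetical readings before and after cleaning and re-pasting.
before = ThermalRun(load_samples_c=[78.0, 84.0, 88.0, 87.0], ambient_c=23.0)
after = ThermalRun(load_samples_c=[75.0, 80.0, 82.0, 81.0], ambient_c=22.0)
drop, success = thermal_audit(before, after)
print(f"Peak-load drop: {drop:.1f} °C, target met: {success}")
```

Here the raw peak fell by 6°C (88°C to 82°C), but the room was also 1°C cooler on re-test day, so the ambient-corrected improvement is exactly 5°C: just enough to meet the target.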

Single Core vs Multi-Core: What Actually Matters for Gaming?

For years, the gaming community has debated the importance of single-core speed versus multi-core count. Historically, many games relied heavily on a single powerful “main thread,” making high-clock-speed x86 processors the clear winners. However, as game engines become more sophisticated and workloads more parallelized, the conversation is shifting. The answer is no longer “one or the other” but “how intelligently are all cores utilized?” This is where ARM’s architectural flexibility presents a disruptive advantage through heterogeneous computing.

Instead of a monolithic block of identical high-power cores, ARM champions a concept known as big.LITTLE technology. This approach, now a staple of modern SoCs, combines high-performance “big” cores with high-efficiency “LITTLE” cores on the same chip. As ARM’s own documentation states:

ARM big.LITTLE technology is a heterogeneous processing architecture that uses up to three types of processors. LITTLE processors are designed for maximum power efficiency, while big processors are designed to provide efficient, sustained compute performance.

– ARM Holdings, ARM official big.LITTLE technology documentation

This allows the system to dynamically assign tasks to the right tool for the job. Background OS processes, audio processing, and networking can be offloaded to the efficiency cores, leaving the powerful performance cores entirely dedicated to the critical game logic and rendering threads. This isn’t just theoretical; research demonstrates that heterogeneous approaches can yield a 21% performance improvement with 23% energy savings. For gaming, this means more stable frame rates and longer battery life on mobile devices. While Intel has introduced its own version with P-cores and E-cores, this heterogeneous philosophy is native to ARM’s DNA, giving it a mature head start in intelligent performance management.
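The idea of sending each task to “the right tool for the job” can be sketched as a toy placement policy in Python. The task list, the load values, and the 0.3 threshold are hypothetical; real operating-system schedulers, such as Linux’s Energy Aware Scheduling, use continuous load tracking and per-core energy models rather than a fixed cutoff.

```python
from dataclasses import dataclass
from enum import Enum


class CoreType(Enum):
    BIG = "big"        # high-performance core
    LITTLE = "LITTLE"  # high-efficiency core


@dataclass
class Task:
    name: str
    latency_critical: bool  # e.g. game logic or render submission
    load: float             # fraction of one core the task needs (0.0 to 1.0)


def assign(tasks: list[Task], little_threshold: float = 0.3) -> dict[str, CoreType]:
    """Toy placement policy (hypothetical threshold): latency-critical or heavy
    tasks go to big cores; light background work goes to LITTLE cores."""
    placement: dict[str, CoreType] = {}
    for task in tasks:
        if task.latency_critical or task.load > little_threshold:
            placement[task.name] = CoreType.BIG
        else:
            placement[task.name] = CoreType.LITTLE
    return placement


frame_tasks = [
    Task("game_logic", latency_critical=True, load=0.9),
    Task("render_submit", latency_critical=True, load=0.6),
    Task("audio_mix", latency_critical=False, load=0.1),
    Task("network_sync", latency_critical=False, load=0.05),
    Task("asset_streaming", latency_critical=False, load=0.5),
]
for name, core in assign(frame_tasks).items():
    print(f"{name:<16} -> {core.value}")
```

With this policy, game logic, render submission, and the heavier asset-streaming task land on big cores, while audio mixing and network sync run on LITTLE cores.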

The VRM Mistake That Cripples Your High-End Processor’s Performance

The Voltage Regulator Module (VRM) on a motherboard is an unsung hero of high-performance computing, responsible for delivering clean and stable power to the CPU. In the world of high-end x86 processors, skimping on motherboard VRM quality is a classic mistake. A weak VRM can overheat under load, failing to supply the massive amounts of power a top-tier Intel or AMD chip demands during boost cycles. This results in voltage drops and performance throttling, effectively kneecapping a premium processor. This dependency on external, high-specification components is a hallmark of the x86 ecosystem’s modular-but-complex design.
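Two pieces of arithmetic show why VRM quality matters: the load-line droop, V_core = V_set - I × R_LL, and the heat a VRM dissipates at a given conversion efficiency. The Python sketch below uses invented values for the requested voltage, current, load line, and efficiencies; real CPU specifications and boards differ, and many motherboards let users adjust the load line (often called load-line calibration).

```python
def core_voltage(v_set: float, current_a: float, loadline_ohm: float) -> float:
    """Voltage reaching the CPU under load: V_core = V_set - I * R_LL (load-line droop)."""
    return v_set - current_a * loadline_ohm


def vrm_heat_watts(cpu_watts: float, efficiency: float) -> float:
    """Heat the VRM itself dissipates while delivering `cpu_watts` at `efficiency` (0 to 1)."""
    return cpu_watts / efficiency - cpu_watts


# Hypothetical boost scenario: 1.30 V requested, 200 A drawn, 1.1 milliohm load line.
print(f"V_core under load: {core_voltage(1.30, 200.0, 0.0011):.3f} V")
# The same 250 W CPU load on a good (92%) and a weak (80%) VRM.
for eff in (0.92, 0.80):
    print(f"{eff:.0%} efficient VRM: {vrm_heat_watts(250.0, eff):.1f} W of heat on the board")
```

Some droop is intended by design; the trouble starts when a weak VRM sags further or overheats. In this example, the 80% efficient VRM dumps about 62.5 W of heat into the board versus about 21.7 W for the 92% efficient one, nearly three times as much, concentrated around the CPU socket.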

This entire problem space highlights the elegance and efficiency of the ARM SoC approach. In a typical ARM-based design, the power management integrated circuits (PMICs) are designed and validated together with the System-on-a-Chip as one platform, rather than being left to each motherboard maker. Power delivery is co-designed with the processor cores, memory, and other components, creating a tightly integrated and highly efficient system. There is less reliance on the “luck of the draw” of motherboard component selection because the most critical elements are engineered from the start to work together.

This integrated approach delivers tangible economic advantages, especially at scale. The cloud computing space provides a powerful example of this principle in action, where performance-per-dollar is the ultimate metric.

Case Study: The AWS Graviton Advantage

Amazon Web Services’ custom-designed Graviton processors, based on ARM architecture, have become a major force in cloud computing. A key reason is their superior price-performance. As highlighted in a detailed analysis, Graviton-based instances deliver up to 40% better price-performance than comparable x86-based instances. This advantage isn’t just about core speed; it is a direct result of the ARM model. The efficiency of the Graviton SoC means lower energy consumption and cooling requirements across the data center, creating operational cost savings that can be passed on to the customer. It’s a clear demonstration of how ARM’s system-level efficiency translates into a powerful economic moat.
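It helps to be precise about what a price-performance figure means. Assuming “40% better price-performance” means 40% more work per dollar, the same workload costs 1/1.4 of the original bill, not 60% of it. A minimal Python sketch, with a hypothetical monthly bill and the further assumption that the workload ports to ARM without losing performance:

```python
def cost_for_same_work(baseline_cost: float, price_perf_gain: float) -> float:
    """Cost of an unchanged workload after performance-per-dollar improves by
    `price_perf_gain` (0.40 means 40% better).

    Work per dollar scales by (1 + gain), so dollars per unit of work scale by
    1 / (1 + gain): a 40% price-performance gain is not a 40% discount.
    """
    return baseline_cost / (1.0 + price_perf_gain)


monthly_x86_bill = 10_000.0  # hypothetical monthly spend on x86 instances
arm_bill = cost_for_same_work(monthly_x86_bill, 0.40)
saving = 1.0 - arm_bill / monthly_x86_bill
print(f"ARM bill: ${arm_bill:,.2f} ({saving:.1%} lower)")
```

That works out to roughly 29% off the bill (about $7,143 instead of $10,000), still a large saving at cloud scale, but not the 40% the headline number might suggest.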

The VRM challenge on consumer x86 platforms and the success of ARM-based Graviton in the cloud are two sides of the same coin. They reveal that the future of performance lies not just in a powerful core, but in the intelligence and efficiency of the entire system that supports it.

How to Safely Overclock Your CPU Without Voiding the Warranty?

The practice of overclocking—manually pushing a CPU beyond its factory-rated speeds—is deeply ingrained in the culture of x86 enthusiasts. It’s a hands-on pursuit of maximum performance, a tradition of tinkering and tweaking voltages and frequencies. For decades, it was the primary way to extract extra power from a system. However, this very practice is becoming a relic of a past paradigm, one that is being replaced by a more intelligent, automated approach championed by the ARM architecture.

The question of “how to safely overclock” is fundamentally an x86 question. It implies a static performance target that must be manually overridden. ARM architecture, by contrast, is built around a philosophy of dynamic, self-regulating performance. It’s less about a user setting a fixed, risky overclock and more about the chip itself intelligently maximizing its potential within its given thermal and power constraints.

ARM chips are designed for dynamic, algorithm-controlled ‘boost’ behavior that automatically maximizes performance when thermal headroom allows. This is a shift from manual user intervention to intelligent, self-regulating systems.

– ARM architecture analysis

This represents a profound shift from user-managed to system-managed performance. Modern ARM-based SoCs, like Apple’s M-series, don’t require the user to enter a BIOS and tinker with voltages. The silicon itself, guided by sophisticated algorithms, constantly assesses workload, temperature, and power availability to boost performance opportunistically and safely, far beyond what a manual overclock could sustainably achieve in a thin-and-light form factor. The future of performance isn’t about voiding your warranty for a 10% gain; it’s about an architecture smart enough to deliver its full boost for the few seconds you actually need it, then immediately return to an efficient state.
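The kind of self-regulating boost described above can be sketched as a toy dynamic voltage and frequency scaling (DVFS) governor in Python. The operating-point table, the 10 W budget, and the linear 10°C ramp near the temperature limit are all hypothetical; real governors, whether in firmware or in the OS (for example Linux’s schedutil cpufreq governor), use far richer models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    freq_mhz: int
    watts: float


# Hypothetical operating-point table, slowest to fastest (power rises monotonically).
OPP_TABLE = [
    OperatingPoint(600, 0.5),
    OperatingPoint(1800, 2.0),
    OperatingPoint(2800, 4.5),
    OperatingPoint(3500, 8.0),
]


def pick_operating_point(utilization: float, temp_c: float,
                         temp_limit_c: float = 95.0,
                         power_budget_w: float = 10.0) -> OperatingPoint:
    """Toy boost governor: follow demand, but stay inside a power budget that
    shrinks as the die approaches its temperature limit."""
    # Full budget until 10 °C below the limit, then ramp linearly down to zero.
    headroom = max(0.0, min(1.0, (temp_limit_c - temp_c) / 10.0))
    budget_w = power_budget_w * headroom
    wanted_mhz = OPP_TABLE[-1].freq_mhz * utilization
    choice = OPP_TABLE[0]  # the slowest point is always allowed as a floor
    for opp in OPP_TABLE:
        if opp.watts > budget_w:
            break  # every faster point would also exceed the budget
        choice = opp
        if opp.freq_mhz >= wanted_mhz:
            break  # slowest point that satisfies the demand
    return choice


for util, temp in [(0.1, 50.0), (1.0, 50.0), (1.0, 92.0), (1.0, 96.0)]:
    opp = pick_operating_point(util, temp)
    print(f"util={util:.0%} temp={temp:.0f} °C -> {opp.freq_mhz} MHz")
```

At 50°C a full-load request gets the top 3500 MHz point; at 92°C the shrinking budget caps it at 1800 MHz; past the limit it falls back to the 600 MHz floor. A light 10% load stays at 600 MHz regardless, which is where the efficiency comes from.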

Classical Supercomputers vs Quantum: Which Wins for Drug Discovery?

While quantum computing holds immense promise for complex simulations like drug discovery, the most powerful *classical* supercomputers today are already tackling these problems. And in this high-performance computing (HPC) arena, a seismic shift is occurring: ARM is challenging and, in some cases, dethroning x86. For decades, data centers and supercomputers were dominated by x86 (primarily Intel’s Xeon), but the same performance-per-watt advantage that conquered mobile is now proving irresistible at the largest scale.

This trend isn’t new. Early research from over a decade ago already demonstrated that an ARM Cortex-A9 core was up to 8.7 times more energy efficient than a contemporary Xeon processor in web server workloads. While the Xeon was far faster in absolute terms at the time, the result was a clear signal of ARM’s efficiency potential. Today, that potential is being realized at scale. The most prominent example is the Fugaku supercomputer in Japan, which has ranked among the world’s fastest. Its processor, Fujitsu’s A64FX, is based not on x86 but on ARM.

The Fugaku in Japan, one of the world’s fastest supercomputers, runs on processors based on ARM architecture. In a datacenter with tens of thousands of CPUs, the lower power draw of ARM translates directly into millions of dollars in saved electricity and cooling costs.

– Exxact Corporation, ARM in HPC analysis

This is the ARM value proposition scaled to its ultimate conclusion. At the supercomputing level, power and cooling are not trivial concerns; they are multi-million-dollar operational costs. ARM’s ability to deliver immense computational throughput within a smaller power envelope is a game-changing economic advantage. It allows for denser racks, lower electricity bills, and a reduced physical footprint. ARM’s advance in the HPC space is the clearest evidence that the future of computing, from the smallest phone to the largest supercomputer, will be dictated by efficiency.
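The “millions of dollars” claim is easy to sanity-check. The Python below multiplies IT load by PUE (power usage effectiveness, the ratio of total facility power to IT power, which folds in cooling), hours per year, and an electricity price. The node count, PUE, price, and both per-node wattages are hypothetical: node B simply stands in for a more efficient design and is not a measurement of Fugaku or any specific system.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(it_load_kw: float, pue: float, usd_per_kwh: float) -> float:
    """Yearly electricity bill: IT load x PUE (cooling and facility overhead) x hours x price."""
    return it_load_kw * pue * HOURS_PER_YEAR * usd_per_kwh


NODES = 10_000  # hypothetical machine size
PUE = 1.3       # hypothetical facility efficiency
PRICE = 0.10    # hypothetical USD per kWh
for label, watts_per_node in [("node A", 400.0), ("node B", 300.0)]:
    cost = annual_energy_cost(NODES * watts_per_node / 1000.0, PUE, PRICE)
    print(f"{label}: ${cost:,.0f} per year")
```

With these assumptions, node A costs about $4.56 million a year to power and node B about $3.42 million, so a 100 W difference per node is worth roughly $1.1 million annually, before counting any savings on the cooling equipment itself.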

Xbox Cloud vs Stadia Tech: Which Streaming Architecture Wins?

The battle for game streaming supremacy, once waged by giants like Microsoft’s Xbox Cloud Gaming and Google’s Stadia, offers a fascinating microcosm of the larger ARM vs. x86 conflict. These services essentially run games in a data center and stream the video output to the player. The choice of server hardware architecture has profound implications for cost, scalability, and energy efficiency. Xbox Cloud Gaming famously uses custom x86-based hardware that mirrors the architecture of its home consoles, ensuring perfect compatibility.

This approach, while effective for compatibility, inherits the power and thermal characteristics of the x86 platform. Running thousands of these high-power server blades generates immense heat and consumes vast amounts of electricity. Google Stadia took a similar route, pairing custom x86 CPUs with AMD GPUs, yet the underlying trend in the cloud is moving in another direction entirely. The very companies that provide the backbone for these streaming services are increasingly betting on ARM.

The economic logic is undeniable. As major cloud providers seek to optimize their massive data centers, the performance-per-watt and density advantages of ARM become a powerful driver for change. Lower power consumption per instance means more customers can be served from the same physical and electrical footprint, directly boosting profitability.
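The “same physical and electrical footprint” argument reduces to simple division. A minimal Python sketch, assuming a hypothetical 15 kW rack power limit and invented per-instance power draws:

```python
def instances_per_rack(rack_budget_w: float, watts_per_instance: float) -> int:
    """Whole instances that fit under a fixed rack power budget."""
    return int(rack_budget_w // watts_per_instance)


RACK_BUDGET_W = 15_000.0  # hypothetical per-rack power limit
for label, watts in [("instance type A", 60.0), ("instance type B", 45.0)]:
    print(f"{label}: {instances_per_rack(RACK_BUDGET_W, watts)} per rack")
```

Cutting per-instance draw by a quarter, from 60 W to 45 W, lets the same rack host about a third more instances (333 instead of 250) without adding power or floor space.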

Microsoft Azure, Amazon AWS, and Google have turned towards using ARM-based processors in their cloud-native computing. As cloud providers look to increase the energy efficiency of their data centers, ARM processors are likely to become an increasingly popular choice for powering cloud instances.

– Exxact Corporation

Therefore, while a specific service like Xbox Cloud may currently run on x86, the foundational layer of the cloud itself is migrating. The future of cloud gaming and other large-scale streaming services will inevitably be influenced by this tectonic shift. The architecture that wins will be the one that provides the most compute power for the lowest operational cost, and on that front, ARM is rapidly gaining ground in the data center.

Key Takeaways

- The ARM vs. x86 debate has shifted from “power vs. efficiency” to a contest of integrated, system-level design, where ARM’s SoC philosophy excels.

- Performance-per-watt is now the most critical metric, driving ARM’s adoption from mobile devices to the world’s fastest supercomputers and largest data centers.

- x86’s legacy of high-power, brute-force performance creates inherent challenges in thermal management and power delivery that require complex and costly external solutions, whereas ARM integrates these functions efficiently.

When Will Quantum Computers Break Current Encryption Standards?

The threat of quantum computers breaking today’s encryption standards is a long-term, existential risk for digital security. While the exact timeline is debated, the prospect has spurred a new focus on building more resilient systems. This is not just about developing new “quantum-proof” algorithms, but also about hardening our current computing architectures against all forms of attack. In this domain of architectural security, the design philosophies of ARM and x86 once again present a stark contrast.

The x86 architecture’s long history and complexity have resulted in a large surface area for security vulnerabilities. Memory-corruption exploits such as buffer overflows and return-oriented programming (ROP) attacks are not unique to x86; they stem from memory-unsafe software on any architecture. But they have plagued x86 systems for decades, and defenses on this platform have often been reactive, taking the form of software patches and mitigations layered on top of the hardware.

ARM has taken a different, more proactive approach by building security features directly into the silicon at the instruction-set level. This represents a fundamental shift toward making entire classes of attacks far harder to pull off by design, rather than patching them after the fact. Features like Pointer Authentication Code (PAC) and Branch Target Identification (BTI) are not software add-ons; they are part of the processor’s core logic.

ARM’s approach of integrating security into the processor’s core instruction flow with features like Pointer Authentication (PAC) and Branch Target Identification (BTI) provides fundamental protection against entire classes of memory corruption attacks that have plagued x86 systems for years.

– ARM architecture security analysis, Red Hat

While this doesn’t solve the future quantum threat, it demonstrates a forward-thinking security posture that is winning favor today. This silicon-level hardening, combined with the efficiency and performance gains, is fueling ARM’s expansion into markets once dominated by x86, including the PC market. Industry projections indicate that ARM’s share in Windows devices may pass 20% by 2025 and could exceed 40% by 2029. The market is voting not just for efficiency, but for a more fundamentally secure architecture.

Ultimately, the evidence points toward a future where computing is defined not by the legacy of brute-force power, but by the intelligence of integrated design. To thrive in this new era, re-evaluating technology choices through the lens of system-level efficiency is no longer optional—it is essential.