The sci-fi vision of tiny robots instantly curing disease is compelling, but the true revolution in nanomedicine is happening now by solving fundamental biological puzzles.

- Effective treatment isn’t just about shrinking technology; it’s about designing nanoparticles that can intelligently navigate, target, and safely exit the human body.

- Success depends on creating “stealth” coatings to evade the immune system and using materials like graphene that are biocompatible with sensitive tissues like the brain.

Recommendation: To understand the future of medicine, focus on the ingenious material science and biological strategies that make these “smart” devices possible, not just their futuristic potential.

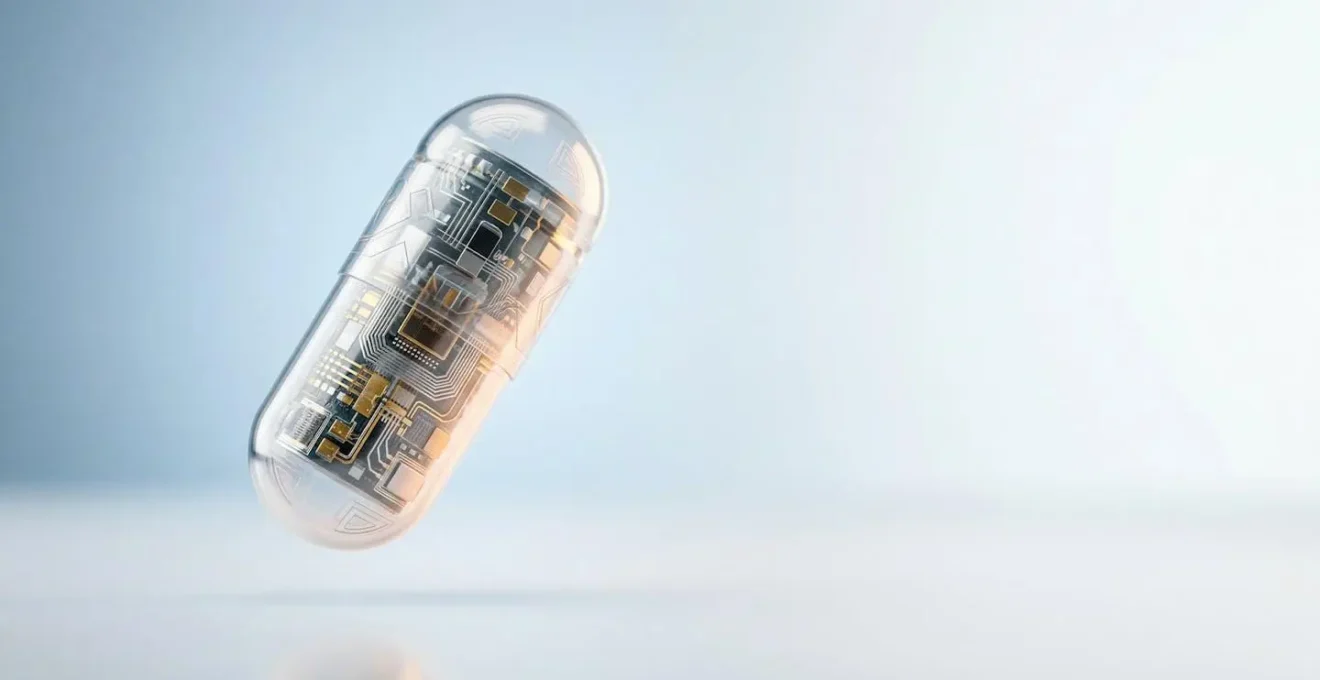

The idea of swallowing a tiny, computerized pill that can travel through your body, diagnose disease, and even perform microscopic repairs feels like it’s been pulled directly from science fiction. For decades, we’ve been captivated by this vision of a “doctor in a pill.” This promise suggests a future where invasive biopsies and blanket treatments like chemotherapy are replaced by hyper-precise, non-invasive interventions. We imagine nanobots as miniature submarines navigating our bloodstream on a mission.

However, the reality is both more complex and, in many ways, more fascinating. The biggest hurdles aren’t just about miniaturizing electronics. The true challenge lies at the delicate interface between a synthetic device and the living, breathing chaos of the human body. Evolution has spent hundreds of millions of years refining a defense system designed to identify and destroy foreign invaders. How do you design a diagnostic tool that isn’t immediately targeted by our own immune cells? How do you ensure these microscopic agents reach a specific cancerous tumor and not a healthy organ? And crucially, what happens to them afterward?

But if the central challenge isn’t merely about shrinking technology, what is the real key? The answer lies in a profound shift in perspective: we must learn to speak the body’s language. The future of nanomedicine is being built not by brute-force engineering, but through clever biological mimicry and advanced material science. It’s a story of creating “stealth” cloaks for nanoparticles, designing materials that dissolve harmlessly, and choosing components that the body accepts as its own.

This article will guide you through the real-world puzzles that nanomedicine researchers are solving today. We will explore the ingenious strategies used to make these smart devices effective and safe, moving beyond the hype to reveal the incredible science that is paving the way for the next generation of diagnostics and treatment.

Summary: The Engineering Puzzles Behind Diagnostic Nanomedicine

- Why Nanobots Can Target Cancer Cells Without Harming Healthy Tissue?

- How to Ensure Nanoparticles Don’t Build Up in the Body?

- Graphene vs Silicon: Which Is Safer for Brain Interfaces?

- The Surface Coating Trick That Hides Nanobots from White Blood Cells

- When Will Nano-Repair of Nerve Damage Be Available in Hospitals?

- When Can We Expect a Fully Simulated Human Organ for Testing?

- Why Skin Rashes Are Hard to Diagnose via Smartphone Cameras?

- How Big Data Is Reducing Drug Discovery Timelines by Years?

Why Nanobots Can Target Cancer Cells Without Harming Healthy Tissue?

The primary promise of nanomedicine, especially in oncology, is precision. The goal is to deliver a potent drug payload directly to a tumor, sparing the healthy cells that are often damaged by systemic treatments like chemotherapy. The initial concept for this, known as passive targeting, relies on a phenomenon called the Enhanced Permeability and Retention (EPR) effect. In theory, the leaky blood vessels surrounding a tumor allow nanoparticles to seep in, and the tumor’s poor lymphatic drainage keeps them trapped there. In practice, the effect is surprisingly weak: one widely cited meta-analysis of preclinical studies found that a median of only about 0.7% of the administered dose reaches the tumor. That is not efficient enough for a reliable treatment.
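To put that figure in perspective, here is a minimal arithmetic sketch. The 100 mg dose is a hypothetical number chosen only for illustration; the 0.7% delivery fraction is the figure cited above.

```python
def split_dose(administered_mg: float, tumor_fraction: float) -> tuple[float, float]:
    """Return (mg reaching the tumor, mg ending up elsewhere in the body)."""
    if not 0.0 <= tumor_fraction <= 1.0:
        raise ValueError("tumor_fraction must be between 0 and 1")
    to_tumor = administered_mg * tumor_fraction
    return to_tumor, administered_mg - to_tumor


# Hypothetical 100 mg dose with the 0.7% passive-targeting figure from the text.
to_tumor, elsewhere = split_dose(100.0, 0.007)
print(f"Reaches tumor: {to_tumor:.1f} mg, goes elsewhere: {elsewhere:.1f} mg")
```

Under these assumptions, more than 99 mg of every 100 mg never reaches the tumor, which is why passive accumulation alone is such a weak foundation.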

This is where active targeting comes in. It’s the difference between floating down a river hoping to wash ashore at the right spot, and having a motor and a GPS. To achieve this, we equip the surface of the nanoparticle with “homing molecules,” typically ligands or antibodies. These molecules are specifically chosen because they bind to unique proteins, or receptors, that are overexpressed on the surface of cancer cells but are rare on healthy cells. Think of it as a key (the ligand on the nanobot) fitting into a specific lock (the receptor on the cancer cell).

When the nanoparticle circulates in the bloodstream and passes by the tumor, these molecular “keys” find their “locks” and bind tightly. This anchors the nanoparticle directly to its target, allowing it to release its diagnostic or therapeutic payload with far greater accuracy. This strategy dramatically increases the concentration of the drug where it’s needed most, while minimizing its exposure to the rest of the body. It’s this biological intelligence, not just small size, that allows nanobots to differentiate friend from foe.
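The key-and-lock picture can be roughed out with the simplest textbook binding model, in which one ligand binds one receptor at equilibrium. This is only a sketch: the receptor counts, ligand concentration, and dissociation constant below are hypothetical values chosen for illustration, and real nanoparticles carry many ligands at once, which this model ignores.

```python
def fraction_occupied(ligand_nM: float, kd_nM: float) -> float:
    """Equilibrium fraction of receptors bound in a simple 1:1 binding model."""
    if ligand_nM < 0 or kd_nM <= 0:
        raise ValueError("ligand_nM must be >= 0 and kd_nM must be > 0")
    return ligand_nM / (ligand_nM + kd_nM)


def bound_receptors(receptors_per_cell: int, ligand_nM: float, kd_nM: float) -> float:
    """Expected number of occupied receptors on one cell."""
    return receptors_per_cell * fraction_occupied(ligand_nM, kd_nM)


# All values below are hypothetical, for illustration only.
HEALTHY_RECEPTORS = 10_000   # receptors on a healthy cell
CANCER_RECEPTORS = 500_000   # same receptor, overexpressed on a cancer cell
LIGAND_NM = 10.0             # free ligand concentration
KD_NM = 10.0                 # dissociation constant of the ligand

healthy = bound_receptors(HEALTHY_RECEPTORS, LIGAND_NM, KD_NM)
cancer = bound_receptors(CANCER_RECEPTORS, LIGAND_NM, KD_NM)
print(f"Bound per healthy cell: {healthy:.0f}")
print(f"Bound per cancer cell:  {cancer:.0f}")
print(f"Selectivity: {cancer / healthy:.0f}x")
```

In this simplified model the selectivity equals the ratio of receptor counts, so the choice of a receptor that is abundant on cancer cells and rare elsewhere does most of the work.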

How to Ensure Nanoparticles Don’t Build Up in the Body?

A critical question for any material we introduce into the body is: what happens to it afterward? The prospect of synthetic particles accumulating in organs like the liver, spleen, or kidneys is a major safety concern. A successful nanodevice must not only perform its function but also be safely eliminated. A permanent resident is not an option. This has led to a major focus on creating bioresorbable and biodegradable materials.

Instead of using inert materials like gold or silica that persist in the body, researchers are engineering nanoparticles from polymers that the body can naturally break down. Materials like poly(lactic-co-glycolic acid) (PLGA) are designed to degrade over a predictable period into lactic acid and glycolic acid, byproducts that are easily metabolized and excreted. The rate of degradation can be tuned by adjusting the polymer’s composition, chiefly the ratio of lactic to glycolic acid units and the chain length, ensuring the nanodevice lasts long enough to do its job before dissolving away.
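As a rough illustration of what “tuning” the degradation rate means, the sketch below assumes the polymer mass decays exponentially with a fixed half-life. That is a deliberate simplification (real polymer hydrolysis follows more complex kinetics), and both half-lives are hypothetical numbers, not measured values for any actual formulation.

```python
import math


def days_until_fraction_left(half_life_days: float, fraction_left: float) -> float:
    """Days for the remaining polymer mass to fall to `fraction_left`,
    assuming simple first-order (exponential) decay."""
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    if not 0.0 < fraction_left <= 1.0:
        raise ValueError("fraction_left must be in (0, 1]")
    return half_life_days * math.log2(1.0 / fraction_left)


# Hypothetical half-lives for two made-up formulations (not measured values).
FORMULATIONS = {"fast-degrading blend": 14.0, "slow-degrading blend": 40.0}

for name, half_life in FORMULATIONS.items():
    days = days_until_fraction_left(half_life, fraction_left=0.05)
    print(f"{name}: about {days:.0f} days until 5% of the mass remains")
```

The point of the exercise is the design lever, not the numbers: changing one material parameter shifts the device’s working lifetime from weeks to months.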

For non-degradable materials that are necessary for certain functions (like imaging), the key is to control their size, shape, and surface chemistry to facilitate renal clearance. Particles with a hydrodynamic diameter below roughly 5-6 nanometers can be filtered out by the kidneys and excreted in urine. Some ultrasmall iron oxide nanoparticles, for example, have been reported to clear through the kidneys within 48 hours. By designing for degradation or excretion from the very beginning, we can ensure these advanced tools are merely temporary visitors, not permanent squatters.

Action Plan: Designing a Biocompatible Nanodevice

- Material Selection: Choose materials with a known, safe degradation pathway (e.g., PLGA, silk-based polymers) or those with a size and charge that promote renal clearance.

- Surface Chemistry: Engineer the nanoparticle’s surface coating (e.g., with PEG) to reduce protein adhesion and prevent aggregation, which can hinder clearance.

- Degradation Profiling: Conduct in-vitro and in-vivo tests to precisely map the material’s degradation timeline and identify all resulting byproducts.

- Biodistribution Studies: Use imaging techniques to track where the nanoparticles travel and accumulate in the body over time, confirming they are cleared from vital organs.

- Excretion Pathway Analysis: Verify the primary route of elimination (e.g., renal or hepatobiliary) to ensure the particles are being effectively removed from the system.
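The first two items of the action plan can be expressed as a small design check. This is a hedged sketch rather than a real screening tool: the `NanoparticleDesign` fields, the 5.5 nm cutoff (taken as the midpoint of the 5-6 nm range mentioned above), and the two example designs are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumption: midpoint of the ~5-6 nm renal filtration range cited in the text.
RENAL_THRESHOLD_NM = 5.5


@dataclass
class NanoparticleDesign:
    name: str
    hydrodynamic_diameter_nm: float
    biodegradable: bool
    stealth_coated: bool  # e.g. a PEG layer


def has_exit_route(design: NanoparticleDesign) -> bool:
    """A design needs either a degradation pathway or a size small enough
    for the kidneys to filter."""
    return design.biodegradable or design.hydrodynamic_diameter_nm < RENAL_THRESHOLD_NM


def review(design: NanoparticleDesign) -> list[str]:
    """Return a list of design concerns; an empty list means none were found."""
    issues = []
    if not has_exit_route(design):
        issues.append("no clear elimination route (not degradable, too large for renal clearance)")
    if not design.stealth_coated:
        issues.append("no surface coating to limit protein adhesion and aggregation")
    return issues


# Two hypothetical designs for illustration.
for candidate in (
    NanoparticleDesign("PLGA carrier", 120.0, biodegradable=True, stealth_coated=True),
    NanoparticleDesign("bare gold particle", 50.0, biodegradable=False, stealth_coated=False),
):
    print(candidate.name, "->", review(candidate) or "no issues flagged")
```

A check like this only covers what can be decided on paper. The remaining items in the plan (degradation profiling, biodistribution, and excretion analysis) still require laboratory and in-vivo data.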

Graphene vs Silicon: Which Is Safer for Brain Interfaces?

When we move from the bloodstream to the most sensitive organ of all—the brain—the rules of engagement become even stricter. Brain-computer interfaces (BCIs) hold immense promise for restoring function to those with paralysis or neurological disorders. For decades, the go-to material for the electrodes in these devices has been silicon. It’s a well-understood semiconductor, the foundation of modern electronics. However, in the delicate, soft environment of the brain, silicon has major drawbacks.

Silicon is rigid and brittle. The brain, on the other hand, is soft and dynamic. This mechanical mismatch can lead to chronic inflammation and the formation of scar tissue around the implant. This glial scarring effectively insulates the electrode, degrading the signal quality over time and ultimately causing the device to fail. The body is actively trying to wall off the rigid, foreign object. Furthermore, traditional silicon-based manufacturing has limitations in creating electrodes at the micro-scale needed to interface with individual neurons without causing significant damage.

This is where graphene, a single layer of carbon atoms arranged in a honeycomb lattice, emerges as a game-changing alternative. Graphene is incredibly strong yet astoundingly flexible, thin, and biocompatible. Its flexibility allows it to conform to the brain’s soft, curved surfaces, minimizing the mechanical stress that leads to scarring. Its excellent conductivity and thinness enable the creation of ultra-small, transparent, and highly sensitive electrodes that can record neural signals with unprecedented fidelity. Graphene’s carbon-based nature also makes it more “biologically friendly” than silicon, provoking a much milder immune response.

Case Study: The World’s First Graphene-Based Brain-Computer Interface

Highlighting the potential of this material, INBRAIN Neuroelectronics successfully performed the world’s first human procedure with a graphene-based cortical interface in 2024. During a brain tumor resection, the device was able to differentiate between cancerous and healthy tissue with micrometer-scale precision. This landmark achievement, detailed in a report from the University of Manchester, not only demonstrated the superior signal quality of graphene but also confirmed its safety in a clinical setting, paving the way for next-generation neuroelectronic therapies.

The Surface Coating Trick That Hides Nanobots from White Blood Cells

The human immune system is a phenomenally effective surveillance network. Specialized cells, like macrophages (a type of white blood cell), constantly patrol the body, engulfing and destroying anything they don’t recognize as “self.” For a nanobot, this is the ultimate threat. No matter how brilliant its design, if it’s immediately identified as foreign and eliminated, it’s useless. The solution to this puzzle is not to fight the immune system, but to trick it with a form of biological camouflage.

The most common and effective “stealth” coating is a polymer called polyethylene glycol, or PEG. When nanoparticles are coated in a dense brush of PEG chains, the chains bind water and form a hydrated layer around each particle. This layer of water molecules effectively masks the nanoparticle’s true surface, physically preventing the immune system’s proteins from latching on and flagging it for destruction. This process, known as PEGylation, significantly increases the circulation time of nanodevices in the bloodstream from mere minutes to many hours, giving them ample time to reach their target.
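To see why the jump from minutes to hours matters, the sketch below compares how much of a dose is still circulating two hours after injection. It assumes simple exponential clearance from the blood, and the two half-lives (10 minutes uncoated, 10 hours PEGylated) are hypothetical values picked to match the “minutes versus hours” contrast, not measurements.

```python
def fraction_in_blood(elapsed_min: float, half_life_min: float) -> float:
    """Fraction of the injected dose still circulating, assuming
    exponential clearance with a fixed half-life."""
    if elapsed_min < 0 or half_life_min <= 0:
        raise ValueError("elapsed_min must be >= 0 and half_life_min must be > 0")
    return 0.5 ** (elapsed_min / half_life_min)


# Hypothetical half-lives, for illustration only.
UNCOATED_HALF_LIFE_MIN = 10.0
PEGYLATED_HALF_LIFE_MIN = 600.0
ELAPSED_MIN = 120.0

for label, half_life in (
    ("uncoated", UNCOATED_HALF_LIFE_MIN),
    ("PEGylated", PEGYLATED_HALF_LIFE_MIN),
):
    remaining = fraction_in_blood(ELAPSED_MIN, half_life)
    print(f"{label}: {remaining:.2%} still circulating after {ELAPSED_MIN:.0f} min")
```

With these assumed values the uncoated particle is essentially gone before it has had a chance to find its target, while most of the coated dose is still in circulation.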

A more advanced and even cleverer trick involves cloaking the nanoparticle in the membrane of one of the body’s own cells. For instance, by wrapping a nanobot in the membrane of a red blood cell, it essentially wears an invisibility cloak that makes it indistinguishable from the billions of other red blood cells. An even more sophisticated approach uses cancer cell membranes. This not only provides camouflage but can also help the nanoparticle home in on other, similar cancer cells. This is achieved by leveraging specific signals on the cell surface, such as the CD47 protein, which acts as a universal “don’t eat me” signal to the immune system. As one research team elegantly explains:

CD47, a ‘marker-of-self’ protein that is highly [overexpressed] on the cytomembrane of CRC cells, has been shown to protect cancer cells from macrophage phagocytosis by transmitting a ‘don’t eat me’ signal through the SIRPα receptor.

– Research team from Signal Transduction and Targeted Therapy, Genetically programmable cell membrane-camouflaged nanoparticles study

When Will Nano-Repair of Nerve Damage Be Available in Hospitals?

The potential to repair damaged nerves using nanotechnology is one of the most exciting frontiers in medicine, offering hope for conditions ranging from spinal cord injury to peripheral neuropathy. The core idea is to create nanoscale scaffolds or deliver growth-promoting factors that guide regenerating nerve fibers (axons) across the site of an injury, effectively bridging the gap. While the science is incredibly promising, the path to widespread hospital availability is long and paved with rigorous testing.

Currently, most of this research is in the preclinical stage, meaning it is being tested in cell cultures and animal models. Scientists have successfully used self-assembling peptide nanofibers to create a scaffold that supports neural regeneration in rats with spinal cord injuries, leading to significant functional recovery. Others are using nanoparticles to deliver neurotrophic factors, the proteins that stimulate nerve growth, directly to the injury site. These approaches have shown remarkable success in the lab.

However, the transition from a lab animal to a human patient is a monumental leap. The human nervous system is far more complex, and the scale of injuries is often much larger. Before any nano-repair technique can be approved, it must go through multiple phases of human clinical trials to prove both its safety and its efficacy. Phase I trials focus primarily on safety and dosing in a small group of healthy volunteers or patients. Phase II expands to a larger patient group to get a preliminary sense of effectiveness, and Phase III involves a large-scale, randomized trial that compares the therapy against existing treatments or a placebo. This process routinely takes 5 to 10 years, if not longer. A realistic timeline would place the first approved nano-repair therapies as an option in specialized centers within the next decade, with broader availability following after that.

When Can We Expect a Fully Simulated Human Organ for Testing?

The development of any new drug or nanomedical device relies on testing, but traditional models have significant limitations. Animal testing is slow, expensive, and often fails to predict human responses accurately. This is where the concept of a “virtual” or fully simulated human organ comes in—a computational model so sophisticated it can accurately replicate the organ’s complex biology, physiology, and response to chemical compounds. This is the ultimate goal of the “in silico” (computer-based) testing revolution.

We are not there yet, but the progress is tangible. The “organ-on-a-chip” is a crucial stepping stone. These are microfluidic devices, about the size of a USB stick, that are lined with living human cells from a specific organ (like the lung, liver, or heart). They are designed to mimic the organ’s structure and function on a micro-scale. We can pump nutrients through tiny channels to simulate blood flow and test how the cells react to a new drug or nanoparticle in real-time. This technology is already helping to reduce reliance on animal testing and providing more accurate data on human toxicity.

The next step is to integrate these individual “organs-on-chips” into a “human-on-a-chip” system, simulating how different organs interact. The ultimate ambition, a fully simulated digital twin of an organ, requires immense computational power and a far deeper understanding of cellular biology than we currently possess. We need to model trillions of cells and their countless interactions. While research projects like the Human Brain Project, which ran from 2013 to 2023, made strides in this direction, a fully predictive, simulated organ for routine drug testing is likely still 15-20 years away. In the meantime, organs-on-chips represent the most powerful simulation tool we have.

Why Skin Rashes Are Hard to Diagnose via Smartphone Cameras?

In an age where our smartphones are powerful computers, it seems intuitive that we could simply take a picture of a skin rash and get an instant, accurate diagnosis from an AI. While many apps attempt this, the reality is that visual diagnosis of dermatological conditions is fraught with complexity, and a simple photo is often not enough information. The limitations of this approach highlight exactly why internal, nanotech-based diagnostics are so revolutionary.

First, lighting conditions dramatically alter the appearance of a rash. The color, texture, and perceived inflammation can look completely different under the warm, yellow light of a bathroom compared to cool, natural daylight. Smartphone cameras and flashes can wash out subtle details or create misleading shadows. Second, a single 2D image cannot capture crucial diagnostic information like the rash’s texture (is it scaly, bumpy, or smooth?), its depth, or whether it blanches (whitens) when pressed. These are tactile clues a dermatologist relies on.
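The lighting problem is easy to reproduce numerically. The sketch below scales each color channel of a single pixel by a per-channel gain, a common simplified model of how a light source tints a scene. The skin color, the “warm bulb” gains, and the `redness_index` metric are all hypothetical choices for illustration, not a clinical measure.

```python
def apply_illuminant(rgb: tuple[int, int, int], gains: tuple[float, float, float]) -> tuple[int, ...]:
    """Scale each channel by the light source's gain, clipped to 0-255
    (a simple per-channel model of colored lighting)."""
    return tuple(min(255, max(0, round(c * g))) for c, g in zip(rgb, gains))


def redness_index(rgb: tuple[int, ...]) -> float:
    """Share of the pixel's total intensity that sits in the red channel."""
    r, g, b = rgb
    total = r + g + b
    return r / total if total else 0.0


# Hypothetical values, for illustration only.
SKIN_PATCH = (200, 120, 110)    # the "true" color of an inflamed patch
DAYLIGHT = (1.0, 1.0, 1.0)      # neutral light leaves colors unchanged
WARM_BULB = (1.0, 0.85, 0.6)    # yellowish light suppresses green and blue

for label, gains in (("daylight", DAYLIGHT), ("warm bulb", WARM_BULB)):
    seen = apply_illuminant(SKIN_PATCH, gains)
    print(f"{label}: camera sees {seen}, redness index {redness_index(seen):.2f}")
```

The same patch of skin produces a noticeably higher redness score under the warm bulb, so an algorithm that reads color at face value would judge the rash more inflamed simply because of where the photo was taken.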

Most importantly, many different skin conditions can look remarkably similar. A specific type of eczema might be visually indistinguishable from a fungal infection or an allergic reaction in its early stages. The diagnosis often depends on the patient’s history, other symptoms, and sometimes, a biopsy to look at the cells. An AI trained on images can only spot patterns; it cannot understand the underlying biological cause. This is the fundamental limit of external observation. It’s like trying to diagnose an engine problem by only looking at the car’s paint job. Smart pills and nanobots, by contrast, are designed to go inside and measure the actual biological markers of disease directly at the source, providing objective data rather than a subjective interpretation of a photo.

Key Takeaways

- Active targeting, which uses molecular “keys” to find cellular “locks,” is vastly superior to passive accumulation for delivering drugs to specific tissues like tumors.

- The safety of nanodevices hinges on biocompatibility, achieved through bioresorbable materials that dissolve harmlessly or by designing particles small enough for renal clearance.

- For sensitive neural interfaces, flexible and biocompatible materials like graphene show far greater promise than rigid silicon, which can cause chronic inflammation and scarring.

How Big Data Is Reducing Drug Discovery Timelines by Years?

The process of discovering, developing, and getting a new drug to market is notoriously long and expensive, often taking over a decade and costing billions of dollars. Each step, from identifying a potential molecular target to running massive clinical trials, is a huge undertaking. However, the convergence of genomics, computational biology, and artificial intelligence—collectively falling under the umbrella of Big Data—is fundamentally reshaping this landscape and dramatically accelerating the timeline.

Historically, identifying a drug target was a slow, hypothesis-driven process. Today, AI algorithms can analyze vast genomic and proteomic databases from thousands of patients to identify novel biological targets associated with a disease in a matter of weeks. Once a target is known, another AI can screen virtual libraries of billions of chemical compounds to predict which ones are most likely to bind to that target and have the desired effect. This “in silico” screening can replace years of painstaking and often fruitless lab work.
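At its core, the screening step is a ranking problem: score every candidate, keep the best few. The sketch below shows that shape only. The compound names and scores are made up, and the `predict_affinity` callable is a stand-in for whatever trained model a real pipeline would use.

```python
import heapq
from typing import Callable, Iterable


def screen(
    library: Iterable[str],
    predict_affinity: Callable[[str], float],
    top_k: int,
) -> list[tuple[str, float]]:
    """Score each compound and return the `top_k` highest-scoring ones,
    best first, without holding the whole scored library in memory."""
    scored = ((compound, predict_affinity(compound)) for compound in library)
    return heapq.nlargest(top_k, scored, key=lambda pair: pair[1])


# Made-up compounds and scores standing in for a real model's predictions.
TOY_SCORES = {"cmpd-A": 0.12, "cmpd-B": 0.91, "cmpd-C": 0.47, "cmpd-D": 0.78}

hits = screen(TOY_SCORES, lambda compound: TOY_SCORES[compound], top_k=2)
for compound, score in hits:
    print(f"{compound}: predicted score {score:.2f}")
```

Streaming the library through `heapq.nlargest` keeps only the current best candidates in memory, which matters when the library holds billions of entries. Only the shortlisted compounds then move on to physical lab testing.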

Furthermore, Big Data is optimizing clinical trials. By analyzing electronic health records and genetic data, researchers can identify and recruit the ideal patient populations for a trial much more quickly. Predictive models can help stratify patients to determine who is most likely to respond to a drug, leading to smaller, faster, and more successful trials. Even the design of the nanomedicines we’ve discussed is being accelerated by simulations that predict how different sizes, shapes, and coatings will behave in the body. By replacing slow, physical trial-and-error with rapid, intelligent computation, Big Data is compressing years of research into months, promising to bring safer, more effective treatments to patients faster than ever before.

This convergence of data science and biology is the engine that will drive the nanomedical revolution forward, turning the theoretical puzzles of today into the standard treatments of tomorrow.